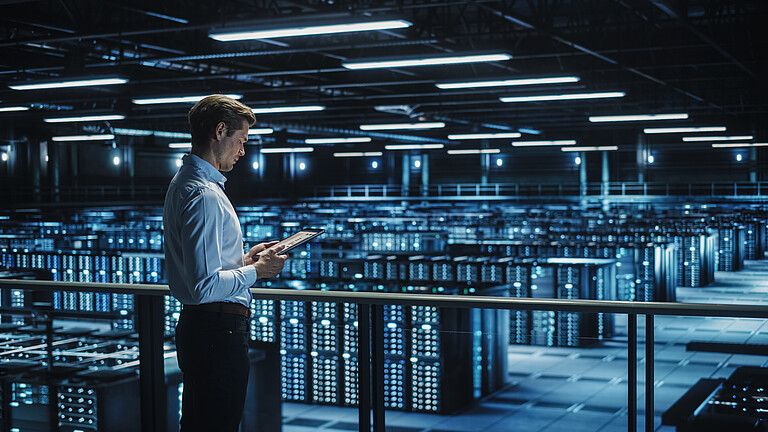

Sizing cooling for AI workloads correctly

Why IT applications generate different cooling profiles

AI has fundamentally changed the demands placed on data centers. While traditional IT systems with 70 kW per rack are already considered high-density environments, AI deployments now often reach 136 kW per rack. This development poses a challenge that is frequently overlooked during system design: not all AI applications generate the same load profile. Multi-GPU modules with shared memory and integrated onboard networks deliver immense computing power, but the resulting thermal load varies greatly depending on the use case. This variability is not a side effect but a key planning factor that some operators still underestimate.

The three most common AI application scenarios exhibit fundamental differences:

Chatbots and interactive applications run in inference mode, where the thermal load stays nearly constant within a high load range. The load is continuous and predictable: the chips run at a consistently high level while processing requests, which enables stable control and reliable heat dissipation. From a climate control perspective, this is a favorable scenario, because the cooling systems can be designed for constant parameters.

Industry 4.0 applications, on the other hand, tend to exhibit a cyclical pattern in the medium to high load range. Here, intensive computing phases alternate with pauses, which is typical for production planning, quality control using AI-based image processing, or industrial prediction systems. These cyclical load patterns require adaptive control concepts, as the cooling systems must switch quickly between different power levels.

AI training, by contrast, produces extreme temperature fluctuations that demand very fast response times. Training is highly iterative and data-dependent. Rapid spikes in thermal load occur depending on the size of the dataset currently being processed, which model components are being trained, and how many GPUs are working in parallel. For example, a training job could ramp up from 60 percent utilization to 100 percent and then drop back to a base load of 15 to 20 percent within a few milliseconds.
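The three load patterns can be made concrete with a small simulation. The following is a minimal Python sketch, not a measurement. The 85 percent inference level and the 80/40 percent cycle levels are illustrative assumptions, while the 60, 100 and 15 percent training levels come from the example in the text. All function names are hypothetical.

```python
import math

def inference_profile(n):
    """Steady high load; the 85 % level and small ripple are assumptions."""
    return [0.85 + 0.02 * math.sin(i / 5) for i in range(n)]

def cyclical_profile(n, period=20):
    """Compute phases alternate with pauses; 80 % / 40 % are assumptions."""
    half = period // 2
    return [0.80 if (i // half) % 2 == 0 else 0.40 for i in range(n)]

def training_profile(n):
    """Repeating 60 % -> 100 % -> 15 % steps, as in the example in the text."""
    levels = [0.60, 1.00, 0.15]
    return [levels[i % 3] for i in range(n)]

def swing(profile):
    """Difference between the highest and lowest utilization."""
    return max(profile) - min(profile)

def max_step(profile):
    """Largest change in utilization between two consecutive samples."""
    return max(abs(b - a) for a, b in zip(profile, profile[1:]))

profiles = {
    "inference": inference_profile(60),
    "cyclical": cyclical_profile(60),
    "training": training_profile(60),
}

for name, p in profiles.items():
    print(f"{name:10s} swing={swing(p):.2f} max_step={max_step(p):.3f}")
```

The `max_step` metric is the more relevant one for cooling control: it indicates how far the load can move between two samples, and therefore how quickly the cooling system has to follow.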

Why standardized cooling concepts are usually insufficient

Standard liquid cooling solutions that are not specifically designed for the dynamic load profiles of modern AI applications are already reaching their physical limits. A core problem is often a lack of thermal reserves, combined with insufficient part-load capability during extreme load swings. While constant loads in inference mode remain manageable, conventional liquid cooling systems fail when faced with the rapid spikes of training phases: they cannot provide the necessary flow rate quickly enough to bring the temperature down.

The way to absorb such peaks safely lies in system flexibility and a hybrid control strategy. A future-proof liquid cooling (LC) infrastructure must be able to switch virtually seamlessly between two operating modes:

1. Inference mode: Here, dynamic cooling via temperature or flow control is crucial for maximum efficiency.

2. AI training: Here, static control based on constant delta-p values (differential pressure) is required.

Only differential pressure control guarantees a maximum flow rate that reliably handles even extreme load peaks, thereby ensuring the system is properly sized for peak demand. A modern liquid cooling system must master this transition between demand-driven dynamics and sustained peak performance so that it can be optimally utilized for both training and subsequent deployment (inference).
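The switch between the two control modes can be sketched as a small state machine. This is a minimal illustration in Python under stated assumptions, not a vendor implementation. Detecting training-like behavior from a jump in utilization, the threshold of 0.25, and the five quiet samples required before returning to dynamic control are all hypothetical choices, as are the class and field names.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

class Mode(Enum):
    DYNAMIC = "temperature/flow control"            # inference-style loads
    CONSTANT_DP = "constant differential pressure"  # training-style loads

@dataclass
class HybridController:
    step_threshold: float = 0.25  # assumed: utilization jump that signals spiky load
    calm_samples: int = 5         # assumed: quiet samples before leaving delta-p mode
    mode: Mode = Mode.DYNAMIC
    _last: Optional[float] = None
    _calm: int = 0

    def update(self, utilization: float) -> Mode:
        """Feed one utilization sample (0.0 to 1.0) and return the active mode."""
        jumped = (
            self._last is not None
            and abs(utilization - self._last) >= self.step_threshold
        )
        if jumped:
            # Large load swing: hold constant delta-p so full flow is available.
            self.mode = Mode.CONSTANT_DP
            self._calm = 0
        elif self.mode is Mode.CONSTANT_DP:
            # Count quiet samples; return to efficient dynamic control when stable.
            self._calm += 1
            if self._calm >= self.calm_samples:
                self.mode = Mode.DYNAMIC
        self._last = utilization
        return self.mode

controller = HybridController()
for u in [0.85, 0.85, 0.60, 1.00, 0.15, 0.15, 0.15, 0.15, 0.15, 0.15]:
    print(f"utilization={u:.2f} -> {controller.update(u).value}")
```

The hysteresis (several calm samples before switching back) is a design choice intended to keep the controller from toggling between modes on every spike. In a real installation, the mode could just as well be selected by the operator or by a scheduling signal.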

The practical implications for infrastructure planning

The different workload profiles have a direct impact on fundamental architectural decisions:

- For chatbot environments, the focus is on investing in reliability and energy efficiency.

- For Industry 4.0 scenarios, intelligent controls are necessary that understand process cycles and enable adaptive regulation.

- For AI training, thermal reserves are becoming a critical factor. Cooling systems and climate control components must be designed so that they never reach their limits, even during massive load spikes.
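What a thermal reserve means in terms of coolant flow can be estimated with the basic heat balance Q = m · c_p · ΔT. The following Python sketch is a rough sizing aid under stated assumptions: the coolant is plain water (c_p of about 4186 J/(kg·K), density of about 997 kg/m³), the entire rack power is removed by the liquid loop, and the 10 K temperature rise and the 20 percent reserve are illustrative values, not figures from the source. Water-glycol mixtures have a lower heat capacity and would need more flow.

```python
def required_flow_l_per_min(heat_kw, delta_t_k, cp=4186.0, density=997.0):
    """Coolant flow needed to carry heat_kw at a temperature rise of delta_t_k.

    cp in J/(kg*K) and density in kg/m^3 default to approximate values for water.
    """
    mass_flow_kg_s = heat_kw * 1000.0 / (cp * delta_t_k)  # from Q = m * cp * dT
    volume_flow_m3_s = mass_flow_kg_s / density
    return volume_flow_m3_s * 1000.0 * 60.0  # m^3/s -> L/min

def flow_with_reserve(heat_kw, delta_t_k, reserve=0.20):
    """Add a relative reserve on top of the peak flow (20 % is an assumption)."""
    return required_flow_l_per_min(heat_kw, delta_t_k) * (1.0 + reserve)

peak_kw = 136.0  # per-rack figure quoted in the article
base_kw = 0.15 * peak_kw  # base load of 15 percent, as in the training example

print(f"peak flow:         {required_flow_l_per_min(peak_kw, 10.0):.1f} L/min")
print(f"base-load flow:    {required_flow_l_per_min(base_kw, 10.0):.1f} L/min")
print(f"peak with reserve: {flow_with_reserve(peak_kw, 10.0):.1f} L/min")
```

Under these assumptions, a single 136 kW rack needs just under 200 L/min at peak, while the base load needs only 15 percent of that. The gap between the two figures is the range the pumps and valves must cover during training.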

Expertise as a success factor

The key lesson in implementing AI infrastructures is to precisely understand the specific workload profiles of the planned application. Many operators fail in practical implementation because real-world load patterns often deviate significantly from theoretical calculations.

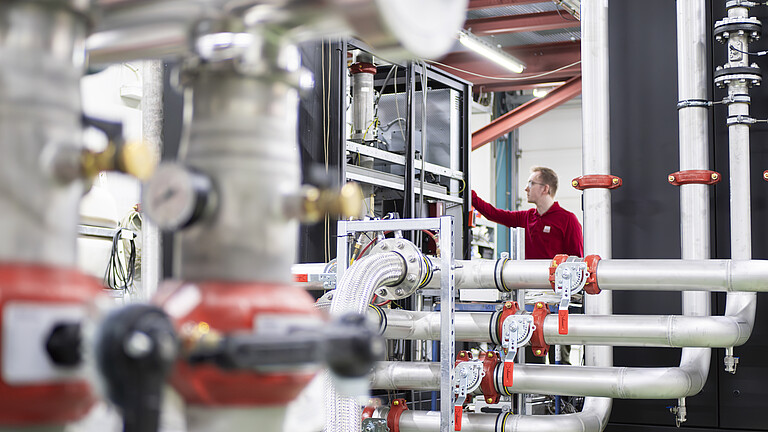

Only through pilot projects with scaled capacity can AI applications be tested under real-world conditions and cooling systems be precisely sized. The STULZ Test Center in Hamburg validates liquid-to-liquid systems for this purpose based on practical performance metrics. In addition to system tests under realistic scenarios, this includes testing flow stability across multiple coolant distribution units (CDUs) as well as continuous fluid monitoring. This empirical approach helps prevent costly planning errors and supports compatibility with future hardware generations.

Conclusion: Differentiated planning for differentiated workloads

The days of “one-size-fits-all” cooling concepts are over. AI data centers require a deep understanding of the specific workloads that will run on-site. A successful strategy combines three elements: a thorough analysis of one’s own workload mix, a partnership with technology providers like STULZ who can test under real-world conditions, and a willingness to invest in pilot projects to scale gradually. Only this combination creates data centers that function optimally today and remain competitive tomorrow.